
The Evolution of ADAS and the Future of Autonomous Driving — Sensing Technologies Supported by Dexerials

Contents

- 1. The evolution of autonomous driving and the rise of CASE

- 2. What is ADAS? Advanced driver assistance systems supporting autonomous driving

- 3. Classification of autonomous driving levels (Levels 0–5)

- 4. Latest trends in Level 4 and Level 5 autonomous driving

- 5. Expansion of the ADAS market and current adoption trends

- 6. High-precision sensing in ADAS supported by sensor fusion

- 7. The role and configuration of ADAS cameras

- 8. Latest trends in ADAS camera technology

- 9. Dexerials' material technologies supporting ADAS camera module design

- 10. Total solutions for optical noise and optical axis misalignment

- 11. Dexerials' initiatives for the autonomous driving era

The evolution of autonomous driving and the rise of CASE

The automotive industry is currently undergoing a wave of technological innovation centered on four key concepts collectively known as CASE. CASE is an acronym for "connected," "autonomous driving," "shared/services," and "electric," and these innovations are expected to reshape the future of mobility.

The elements of CASE

- 1. Connected: Vehicles are continuously connected to the internet, other vehicles, and infrastructure, enabling real-time information sharing and the provision of advanced services.

- 2. Autonomous driving: Technologies that enable vehicles to drive autonomously by leveraging artificial intelligence and sensor technologies. These are expected to reduce traffic accidents and improve transportation efficiency.

- 3. Shared & Services: New mobility services such as car sharing and ride sharing, where vehicles are shared among users.

- 4. Electric: The growing adoption of electric vehicles (EVs) and hybrid vehicles (HVs) to reduce environmental impact.

This article focuses on autonomous driving, the second pillar of CASE, and explains advanced driver assistance systems (ADAS) and the critical role sensors play within them.

What is ADAS? — Advanced driver assistance systems supporting autonomous driving

ADAS, short for advanced driver assistance systems, refers to systems designed to assist drivers and improve safety and comfort as the industry moves toward autonomous driving. Typical ADAS functions include:

- Lane departure warning (LDW)

- Automatic emergency braking (AEB)

- Adaptive cruise control (ACC)

- Parking assistance systems

These functions are regarded as essential technological foundations for achieving autonomous driving.

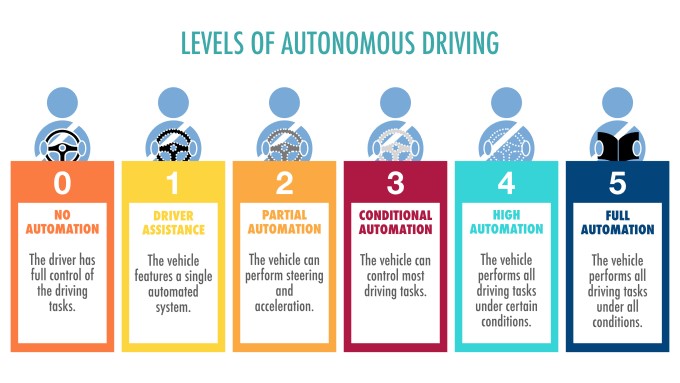

Classification of autonomous driving levels (Levels 0–5)

Autonomous driving is internationally classified into six levels (0 through 5):

| Level | Description |
| --- | --- |
| Level 0 (No automation) | The driver performs all driving tasks. |
| Level 1 (Driver assistance) | The vehicle assists with either steering or acceleration/braking. |
| Level 2 (Partial automation) | The vehicle controls both steering and acceleration/braking, but driver supervision is required. |
| Level 3 (Conditional automation) | The system performs driving tasks under specific conditions, but the driver must intervene when requested. |
| Level 4 (High automation) | The vehicle performs fully autonomous driving under specific conditions without driver intervention. |
| Level 5 (Full automation) | The system performs all driving tasks in all conditions, eliminating the need for a steering wheel or pedals. |
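The levels listed above map naturally onto an ordered enumeration. The following is a minimal sketch of that idea in Python; the names `DrivingLevel` and `driver_role` are hypothetical and chosen only for illustration, and the returned strings paraphrase the descriptions given for each level.

```python
from enum import IntEnum


class DrivingLevel(IntEnum):
    """Automation levels 0 to 5 as listed in the classification above."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def driver_role(level: DrivingLevel) -> str:
    """Summarize what is expected of the driver (hypothetical helper)."""
    if level <= DrivingLevel.PARTIAL_AUTOMATION:
        # Levels 0 to 2: the driver drives or must supervise at all times.
        return "drives or supervises continuously"
    if level == DrivingLevel.CONDITIONAL_AUTOMATION:
        # Level 3: the system drives, but can hand control back.
        return "must take over when the system requests"
    if level == DrivingLevel.HIGH_AUTOMATION:
        # Level 4: no intervention, but only under specific conditions.
        return "no intervention within the specified conditions"
    # Level 5: no intervention under any conditions.
    return "no intervention in any conditions"


for level in DrivingLevel:
    print(f"Level {level.value}: {driver_role(level)}")
```

Using an ordered type makes comparisons such as "Level 3 and higher" a simple `>=` check.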

Currently, Level 2 autonomous driving functions are widely adopted in newly released vehicles around the world. In particular, ADAS features are increasingly becoming standard equipment in Europe and Japan. Meanwhile, Level 3 and higher technologies require further regulatory development and safety validation, and their deployment remains limited.

For example, a Japanese automaker launched a Level 3-equipped vehicle in 2021, but sales were limited to just 100 units. European automakers have also introduced Level 3 systems, though only in selected markets.

Latest trends in Level 4 and Level 5 autonomous driving

At Level 4, robotaxis (fully driverless taxis) are being introduced in limited geographic areas, and efforts toward commercialization are continuing.

- Autonomous driving services in North America: Robotaxi services are being deployed in certain areas of California, Arizona, Texas, and Nevada in the United States (although one North American company has already announced its withdrawal).

- Autonomous driving services in China: Autonomous taxis are undergoing pilot operations in Beijing and Shanghai.

However, full autonomous driving on public roads remains subject to significant legal constraints, and commercialization requires a cautious approach. Discussions on legal regulations surrounding autonomous driving are ongoing in various countries. In particular, determining liability when a Level 4 or higher vehicle is involved in an accident remains a key issue, and regulatory responses to Level 4 deployment differ by country.

Meanwhile, driverless operation in limited areas or for specific uses such as logistics and delivery with commercial vehicles is expected to improve business efficiency and profitability. In North America, for example, a major autonomous driving system company has recently partnered with a ride-hailing platform provider to launch new services.

Expansion of the ADAS market and current adoption trends

According to survey data from 2022, the global installed base of ADAS and autonomous driving systems reached approximately 40 million vehicles as of 2021. Since then, driver assistance functions have increasingly become standard equipment in new vehicles sold across many countries, and ADAS penetration continues to rise year by year. In particular, ongoing functional enhancements at Level 2 and Level 2+ are expected to drive further market growth. (Reference: "Global market for autonomous vehicles to reach 79 million units by 2030—double the 2021 results," Yano Research Institute forecast, Automobile Business & Culture Association of Japan)
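As a rough illustration of what these figures imply, the sketch below computes the compound annual growth rate between the two endpoints quoted above (about 40 million in 2021 and 79 million in 2030). The function name `implied_annual_growth` is hypothetical, and the calculation assumes the two figures are directly comparable and that growth is smooth, which a real market will not be.

```python
def implied_annual_growth(start_units: float, end_units: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_units <= 0 or years <= 0:
        raise ValueError("start_units and years must be positive")
    return (end_units / start_units) ** (1.0 / years) - 1.0


# Endpoints quoted in the text: about 40 million (2021), 79 million (2030).
rate = implied_annual_growth(40e6, 79e6, 2030 - 2021)
print(f"Implied growth: {rate:.1%} per year")
```

Under these assumptions the implied growth works out to roughly 8% per year.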

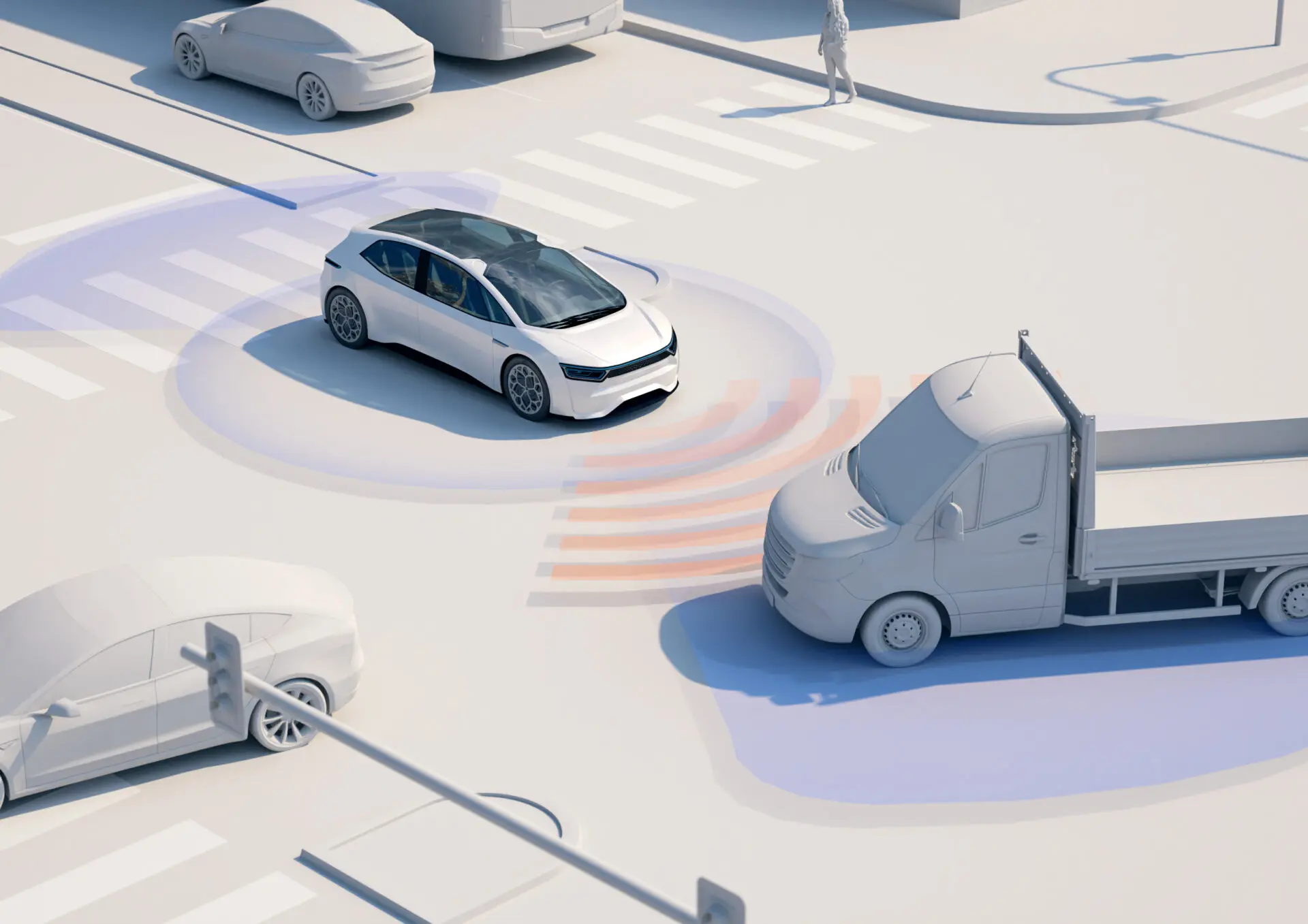

High-precision sensing in ADAS supported by sensor fusion

Various sensors capable of accurately detecting the vehicle's surroundings are indispensable for ADAS functions, which are at the core of autonomous driving. Currently, the following technologies are primarily used:

Major sensors

- Cameras: Recognize lane markings and traffic signs, and detect pedestrians, from image data

- Radar: Uses electromagnetic waves to measure relative vehicle speed and distance

- LiDAR: Enables 3D mapping and highly accurate object detection using laser technology

- Ultrasonic sensors: Detect nearby obstacles using ultrasonic waves
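Several of these sensors estimate distance from the time an emitted wave takes to return. The article does not specify a ranging method, so the sketch below assumes simple pulsed time-of-flight, which fits pulsed LiDAR and ultrasonic sensors; many automotive radars use frequency-modulated signals instead. The function name and the example timings are hypothetical.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact value in vacuum
SPEED_OF_SOUND_M_S = 343.0          # approximate, dry air at about 20 °C


def echo_distance_m(round_trip_s: float, wave_speed_m_s: float) -> float:
    """Distance to a target from the round-trip time of an echo."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The wave travels to the target and back, hence the division by two.
    return wave_speed_m_s * round_trip_s / 2.0


# Hypothetical timings: 1 microsecond for a laser pulse, 10 ms for ultrasound.
lidar_m = echo_distance_m(1e-6, SPEED_OF_LIGHT_M_S)
ultrasonic_m = echo_distance_m(0.01, SPEED_OF_SOUND_M_S)
print(f"LiDAR target: {lidar_m:.1f} m, ultrasonic target: {ultrasonic_m:.3f} m")
```

The large difference in wave speed is one reason ultrasonic sensors are limited to nearby obstacles while laser-based sensors can cover long ranges.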

Each of these sensors has its own strengths and weaknesses. By combining multiple sensors with different characteristics, vehicles can achieve more accurate recognition of their surroundings. Safe autonomous driving must function not only in clear daytime conditions but also in rain, snow, fog, and at night. To achieve this, a key technology is sensor fusion, which integrates data from multiple sensors to enable more precise environmental perception.

By advancing sensor fusion, the weaknesses of individual sensors can be compensated for, enabling safer and more reliable driver assistance. Additionally, to address harsh weather conditions such as heavy rain or snow, infrastructure-based solutions are also being explored, such as transmitting radio signals from traffic signals or roadside systems to support vehicle sensing.
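One simple way to see how fusion compensates for individual weaknesses is inverse-variance weighting of independent distance estimates. This is a textbook technique used here only as an illustration; the article does not state which fusion method any particular system uses, and the sensor names and uncertainty values below are assumptions.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    sensor: str
    distance_m: float
    sigma_m: float  # assumed 1-sigma measurement uncertainty


def fuse_ranges(readings: list[RangeReading]) -> tuple[float, float]:
    """Fuse independent range estimates by inverse-variance weighting."""
    if not readings:
        raise ValueError("at least one reading is required")
    # A more precise sensor (smaller sigma) receives a larger weight.
    weights = [1.0 / (r.sigma_m ** 2) for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    fused_sigma = (1.0 / total) ** 0.5
    return fused, fused_sigma


# Hypothetical readings of the same object from three sensors.
readings = [
    RangeReading("camera", 52.0, 4.0),
    RangeReading("radar", 50.0, 0.5),
    RangeReading("lidar", 50.4, 0.2),
]
distance, sigma = fuse_ranges(readings)
print(f"Fused distance: {distance:.2f} m (sigma {sigma:.2f} m)")
```

The fused uncertainty is never larger than that of the best single sensor, and if one sensor degrades (for example, a camera in fog), raising its `sigma_m` automatically shifts weight to the others.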

The following examples show sensor fusion in practice:

- A European automaker's vehicle announced in 2018 was equipped with 6 cameras, 5 millimeter-wave radars, 1 LiDAR unit, and 12 ultrasonic sensors.

- Vehicles used in autonomous driving services in North America are equipped with 16 cameras, 21 millimeter-wave radars, and 5 LiDAR units.

- A model released by a Japanese automaker in 2021 was equipped with 2 front sensor cameras, 5 radar sensors, 12 sonar sensors, and 5 LiDAR units.

As these examples demonstrate, increasing the number of sensors enhances sensing capabilities. However, as sensor performance improves and sensor counts increase, rising vehicle costs have become a significant challenge.

The role and configuration of ADAS cameras

Cameras that recognize visual information from the external environment play a particularly important role in ADAS. Recently, systems incorporating multiple cameras into a single module, referred to as dual-camera and triple-camera systems, have been increasingly adopted.

- Dual-camera systems: By using two cameras, stereoscopic vision becomes possible, improving the accuracy of distance measurement to objects. This enables more precise collision avoidance and pedestrian detection.

- Triple-camera systems: By using three cameras, wider-area and higher-precision image recognition can be achieved. For example, by combining cameras with different focal lengths, objects at both short and long distances can be accurately recognized simultaneously.
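The distance measurement that dual-camera systems enable can be sketched with the standard pinhole stereo relation, depth = focal length × baseline / disparity. The code below assumes an idealized, rectified stereo pair; the function name and all numeric values are hypothetical and do not describe any specific product.

```python
def stereo_depth_m(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point from its disparity in a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera: 1200 px focal length, 0.3 m between the two lenses.
FOCAL_PX = 1200.0
BASELINE_M = 0.3

depth = stereo_depth_m(FOCAL_PX, BASELINE_M, 12.0)
depth_off_by_one = stereo_depth_m(FOCAL_PX, BASELINE_M, 11.0)
print(f"Depth at 12 px disparity: {depth:.1f} m")
print(f"Depth at 11 px disparity: {depth_off_by_one:.1f} m")
```

Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant objects than for near ones, which is one reason stable camera alignment is important for stereo ranging.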

Camera systems are typically installed near the upper portion of the windshield and monitor a wide area in the vehicle's forward direction. This enables various driving support functions such as lane-keeping assistance and traffic sign recognition.

Latest trends in ADAS camera technology

As ADAS continues to evolve, further advances in camera technology are expected. In particular, the following technical requirements are increasingly important:

- Higher resolution: Adoption of high-resolution cameras is progressing in order to accurately recognize distant obstacles and traffic signs.

- Nighttime and adverse weather performance: Development of camera technologies capable of stable operation under low-light conditions, rain, fog, and other challenging environments is essential.

- Integration with AI: Image processing technologies utilizing deep learning enable more advanced object recognition.

- Lower power consumption: As electrification advances, technologies that reduce the power consumption of camera systems are increasingly required.

In sensing applications, there is growing demand in particular for the suppression of noise induced by stray light (unwanted reflected light). Dexerials is addressing this need through the following measures:

Material technologies for optical noise reduction

- High-transparency surface treatments tuned to the wavelengths used by each sensor type (e.g., moth-eye structures)

- Development of anti-fog materials to address fogging of the windshield located in front of the sensor

- Development of low-reflection resins that suppress stray light and other unwanted light reaching sensing cameras and LiDAR sensors

These technologies improve the overall S/N ratio of ADAS systems by suppressing noise (N) caused by external light disturbances relative to the signals (S) that sensors are intended to detect.
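The effect of suppressing noise on the S/N ratio can be made concrete with the usual decibel definition for power quantities, SNR = 10·log10(S/N). The sketch below uses that definition with made-up power values; it is not a model of any specific sensor or material.

```python
import math


def snr_db(signal_power: float, noise_power: float) -> float:
    """Signal-to-noise ratio in decibels for power quantities."""
    if signal_power <= 0 or noise_power <= 0:
        raise ValueError("signal and noise power must be positive")
    return 10.0 * math.log10(signal_power / noise_power)


# Hypothetical values in arbitrary units: same signal, stray-light noise halved.
before = snr_db(100.0, 10.0)
after = snr_db(100.0, 5.0)
print(f"S/N before: {before:.1f} dB, after: {after:.1f} dB")
print(f"Improvement: {after - before:.2f} dB")
```

With the signal unchanged, halving the noise power raises the S/N ratio by about 3 dB, which is why reducing stray light helps even when the sensor itself is not modified.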

Dexerials' material technologies supporting ADAS camera module design

In the ADAS camera systems that have been increasingly adopted in recent years, rigid-flex printed circuit boards (rigid FPCs) are used to connect the camera board and the main board. Achieving both cost efficiency and reliability in rigid FPC connections has traditionally been difficult. Connecting FPCs to boards with Dexerials' anisotropic conductive film (ACF) addresses this, and configurations that ensure reliability while reducing costs have been adopted by many European automotive manufacturers. One specific example is the CP881AM series, an ACF for automotive film-on-board applications that has obtained IATF certification. Customers adopting this product have achieved both high reliability and reduced costs.

In addition, Dexerials' precision fixing adhesives are used for securing camera lenses and bonding optical components, and their adoption is expected to expand.

Total solutions for optical noise and optical axis misalignment

Dexerials' strength lies not only in material supply, but also in providing a total solution for addressing the issues of optical axis misalignment and optical noise in sensing devices such as in-vehicle cameras and LiDAR sensors. These challenges are directly linked to product quality and safety in mass production. Specifically, in addition to developing and supplying materials for precision camera fixation, we also provide evaluation equipment to measure optical axis misalignment, as well as optimization support at the design stage. Rather than simply selling materials, we contribute to achieving the sensing performance our customers require through comprehensive support that spans evaluation and design assistance.

In recent years, automotive cameras have continued to increase in resolution, and tolerance levels for optical axis misalignment have become stricter each year. Compared with conventional materials, Dexerials' products have demonstrated advantages in both the Z-axis and tilt-axis directions. Building on our track record of adoption in Japan, we plan to expand deployment sequentially across Asia, Europe, the United States, and ASEAN markets.
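A simplified pinhole model helps show why higher resolution tightens tolerances: an angular deviation of the optical axis shifts the image by roughly focal length × tan(tilt), and the same physical shift covers more pixels when the pixels are smaller. The sketch below covers tilt only, not Z-axis (focus) shifts, and every number in it is a hypothetical illustration rather than a Dexerials specification.

```python
import math


def image_shift_px(focal_length_mm: float, tilt_deg: float, pixel_pitch_um: float) -> float:
    """Image shift in pixels caused by a small optical-axis tilt (pinhole model)."""
    if pixel_pitch_um <= 0:
        raise ValueError("pixel pitch must be positive")
    # Convert the focal length to micrometers so the units match the pixel pitch.
    shift_um = focal_length_mm * 1000.0 * math.tan(math.radians(tilt_deg))
    return shift_um / pixel_pitch_um


# Hypothetical lens (6 mm) and tilt (0.05 degrees) on two sensor generations.
coarse = image_shift_px(6.0, 0.05, pixel_pitch_um=3.0)
fine = image_shift_px(6.0, 0.05, pixel_pitch_um=2.1)
print(f"Shift with 3.0 um pixels: {coarse:.2f} px")
print(f"Shift with 2.1 um pixels: {fine:.2f} px")
```

For the same mechanical tilt, the finer-pitch sensor sees a larger shift in pixel terms, so holding the shift below a fixed pixel budget requires a smaller allowable tilt.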

Furthermore, we have independently developed a black coating technology designed to suppress infrared reflective stray light generated inside cameras and LiDAR systems. In autonomous driving and ADAS applications, infrared light at 905 nm and 1550 nm is used for detection. However, while conventional black resins can absorb visible light, they tend to reflect infrared wavelengths, which can degrade sensing accuracy. To address this, we developed a new coating resin that achieves extremely low reflectance in the infrared region through a three-layer mechanism of absorption, internal scattering, and external scattering. Comparative testing against automotive-grade materials used by other companies has confirmed its superior performance.

Through a combination of materials and expertise, we aim to solve two critical factors that affect sensing quality: preventing camera components from "shifting" and preventing reflective stray light from "intruding" as noise. Together with automotive manufacturers, we will continue to develop core technologies for the era of autonomous driving.

Dexerials' initiatives for the autonomous driving era

The elements of CASE are interconnected and collectively shape the future of the automotive industry. In particular, the evolution of ADAS represents a key step toward realizing autonomous driving technology, and interest in its future continues to grow. While technological development in autonomous driving is progressing rapidly, regulatory frameworks and social acceptance will be critical factors going forward.

In step with the advancement of autonomous driving and ADAS, Dexerials will continue to expand its portfolio of highly reliable solutions centered on materials technologies. We remain committed to further enhancing sensing performance in the years ahead.


Dexerials is a materials manufacturer that produces materials essential for the evolution of devices and next-generation solutions.

We will create new value with partners around the world in areas including electronic components, bonding materials, and optical materials.


